Last Updated on April 10, 2026 by Vivekanand

In Part 5 of this series, we watched the compiler backend translate platform-independent LLVM IR into target-specific machine instructions. But we deliberately sidestepped the hardest problem in the entire backend: register allocation in compilers. LLVM IR hands the backend thousands of virtual registers — %1, %2, %3, stretching into the hundreds for any non-trivial function. Meanwhile, your x86-64 CPU offers exactly 16 general-purpose registers. ARM64 is more generous with 31, but it is still a finite resource constrained by strict calling convention rules.

This is the fundamental tension at the heart of register allocation in compilers: how do you map an unbounded set of variables onto a brutally limited set of physical storage locations, while keeping your program as fast as possible? Get it wrong, and variables spill to the stack — RAM that is orders of magnitude slower than a register. Get it right, and your code flies.

Table of Contents

1. The Register Allocation Problem in Compilers

Before diving into algorithms, let’s understand exactly why register allocation in compilers is such a difficult problem. It is not a simple matter of assigning names — several constraints make this a deeply challenging optimization puzzle.

Register Classes Are Separate Pools

A CPU does not have one monolithic set of registers. Instead, registers are split into distinct classes that serve different purposes. On x86-64, you have 16 general-purpose registers (RAX through R15), 16 SSE/AVX floating-point registers (XMM0–XMM15, or 32 with AVX-512), and various special-purpose registers. On ARM64, you get 31 general-purpose registers (X0–X30) and 32 SIMD/FP registers (V0–V31). The register allocator must respect these boundaries — an integer variable cannot be placed in a floating-point register.

Calling Conventions Shrink the Available Pool

Not all registers are freely available to the allocator. As we explored in Assembly Part 2: Calling Conventions, the ABI splits registers into caller-saved (volatile) and callee-saved (non-volatile) categories. Caller-saved registers like RDI and RSI on x86-64 are destroyed across function calls, so any value in them must be saved before a call instruction. Callee-saved registers like RBX and R12–R15 persist across calls, but using them forces the compiler to insert save/restore code in the function prologue and epilogue.

The following table summarizes the key differences between the two major architectures’ register files and how they impact register allocation in compilers:

| Feature | x86-64 | ARM64 |

|---|---|---|

| General-Purpose Registers | 16 (RAX–R15) | 31 (X0–X30) |

| FP / SIMD Registers | 16 (XMM0–15) / 32 (AVX-512) | 32 (V0–V31) |

| Caller-Saved (Volatile) | RAX, RDI, RSI, RDX, RCX, R8–R11 | X0–X15 |

| Callee-Saved (Non-Volatile) | RBX, RBP, R12–R15 | X19–X28 |

| Link Register | ❌ (return address on stack) | X30 (LR) — avoids stack write for leaf functions |

ARM64’s nearly double-sized register file gives the compiler significantly more room to work with. This architectural advantage means ARM64 code inherently suffers fewer register spills for the same source code — a direct consequence of RISC philosophy prioritizing a large, uniform register set.

2. Liveness Analysis — When Are Variables Alive?

Before the compiler can assign physical registers, it must answer a fundamental question for every variable: when is this variable alive? A variable is considered live from the point where it is first defined (written) to the point where it is last used (read). This span is called its live range or live interval.

The reason live ranges matter is simple: if two variables are alive at the same time, they interfere — they cannot share the same physical register. If variable v1 is alive from instruction 1 to instruction 5, and variable v2 is alive from instruction 3 to instruction 7, then both are alive simultaneously during instructions 3–5. They must be placed in different registers.

Consider this small function and its corresponding live ranges:

int example(int a, int b, int c) {

int v1 = a + 1; // Instruction 1: v1 defined

int v2 = b + 2; // Instruction 2: v2 defined

int v3 = v1 + v2; // Instruction 3: v1, v2 used; v3 defined

int v4 = c + 3; // Instruction 4: v4 defined

int v5 = v3 + v4; // Instruction 5: v3, v4 used; v5 defined

return v5; // Instruction 6: v5 used

}The live range timeline for each variable looks like this:

Instruction: 1 2 3 4 5 6

─────────────────────────────────────────

v1: ├────────┤

v2: ├───┤

v3: ├────────┤

v4: ├───┤

v5: ├───┤From this diagram, we can extract the interference relationships. Variables v1 and v2 overlap during instructions 2–3, so they interfere. Variables v3 and v4 overlap during instructions 4–5, so they also interfere. However, v1 and v4 never overlap — they could potentially share the same register. This observation is the key insight that drives the next step: building an interference graph.

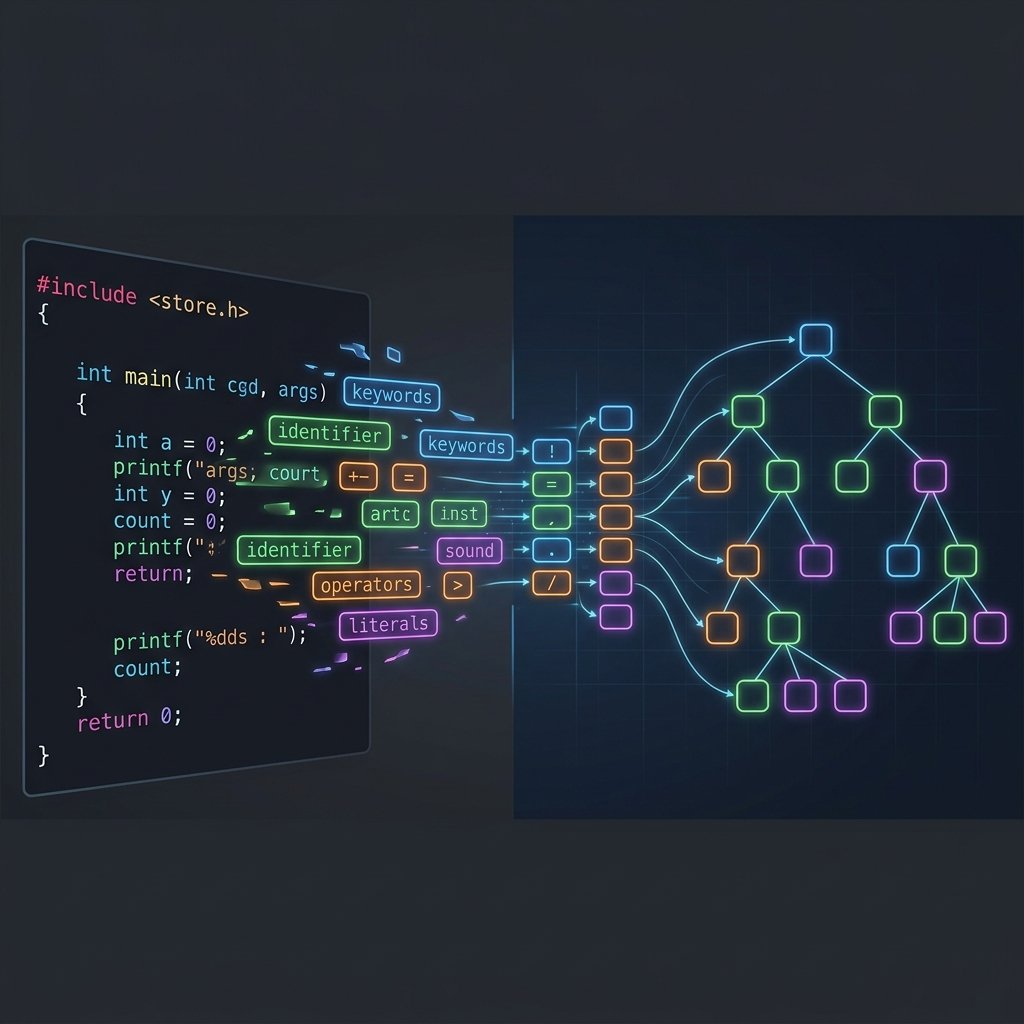

Liveness analysis is performed by iterating backward through each basic block, tracking which variables are used before being redefined. In LLVM, the LiveIntervals analysis pass builds these intervals for every virtual register in the function, forming the foundation for the register allocation compiler phase.

3. Register Allocation via Graph Coloring

The classic academic approach to register allocation in compilers models the problem as graph coloring. The idea is elegant: build a graph where each node represents a variable’s live range, and draw an edge between any two nodes that interfere (are alive at the same time). Then, color this graph with K colors, where K equals the number of available physical registers. If you can color the graph so that no two adjacent nodes share a color, you have a valid register assignment.

Using our example from above, the interference graph looks like this:

Interference Graph (K = 3 registers available)

v1 ──── v2 Interfering pairs:

• v1 ↔ v2 (both live at instructions 2–3)

v3 ──── v4 • v3 ↔ v4 (both live at instructions 4–5)

v5 No other overlaps — see below.

Coloring solution (only 2 registers needed):

v1 → R1 v2 → R2 v3 → R1 v4 → R2 v5 → R1Notice that v1 and v3 receive the same color (R1) even though v3 = v1 + v2 reads v1 at the same instruction where v3 is defined. This works because the read of v1 completes before the write to v3 — the old value is consumed before the new one takes the register. The same logic applies at instruction 5: v4 and v5 share R1 because v4’s read finishes before v5’s write. With just 2 registers, we have successfully allocated 5 variables — no spills needed. Having 3 available registers means even more headroom for real-world functions.

Why K-Coloring Is NP-Hard

In theory, graph coloring for K ≥ 3 is NP-complete — no known polynomial-time algorithm can solve all instances. However, Chaitin’s algorithm (1981) uses a clever heuristic that works remarkably well in practice: find a node with fewer than K edges, remove it from the graph (push it onto a stack), and repeat. If you get stuck — every remaining node has K or more edges — you must spill one of them. After simplification, pop nodes off the stack and assign colors greedily. This simplify-select loop produces near-optimal results for the structured interference graphs that real programs generate.

4. LLVM’s Greedy Register Allocator

While graph coloring is the textbook approach, LLVM’s production register allocation compiler pass uses a more sophisticated algorithm called RAGreedy — the Greedy Register Allocator. It is the default allocator for all optimized builds and is designed for the messy realities of production code generation.

Priority Queue and Spill Weights

Instead of processing live intervals in a fixed order, RAGreedy places all virtual register intervals into a priority queue. The priority is determined by the spill weight — a metric calculated from use density, loop nesting depth, and interval size. High-weight intervals (frequently used variables inside hot loops) are allocated first, ensuring they get the best registers. Low-weight intervals (rarely used variables in cold paths) are allocated last and are the first candidates for spilling if pressure is high.

Live Range Splitting — The Secret Weapon

This is where RAGreedy truly shines. When an interval cannot fit into any available register, the allocator does not immediately spill the entire variable. Instead, it attempts to split the live range into smaller sub-intervals. Each sub-interval can then be assigned to a different physical register, or only the cold portions are spilled while the hot-loop portion stays in a register. This is a global operation that works across basic block boundaries — something the classic graph coloring approach struggles with.

Eviction

When the allocator encounters a conflict, it evaluates whether to evict an existing assignment. If the current interval has a higher spill weight than the occupant of a desired register, the allocator kicks out the lower-priority interval and re-enqueues it. This allows globally better decisions — a high-priority loop variable can claim a register from a lower-priority variable that was allocated earlier.

LLVM also provides alternative allocators for different use cases. The -regalloc=fast allocator is used for -O0 debug builds — it is extremely fast but produces poor code. The -regalloc=basic allocator is a simple educational implementation. You can switch between them using llc or Clang’s -mllvm flag to observe the difference in output quality. More details on LLVM’s backend architecture can be found in the official LLVM Code Generator documentation.

5. Spilling and Rematerialization — When Registers Run Out

No matter how clever the allocator is, sometimes there simply are not enough registers. When the number of simultaneously live variables exceeds the physical register count, the compiler must spill — store a register’s value to a stack slot in memory and reload it later when needed.

The Cost of Spilling

Spilling inserts two types of instructions into your code. A store (spill) writes the register value to the stack: mov [rbp-8], rax on x86-64, or str x0, [sp, #16] on ARM64. A load (reload) fetches it back: mov rax, [rbp-8] or ldr x0, [sp, #16]. Even when the data hits L1 cache (~4 cycles latency), this is infinitely slower than a register access (0 cycles — the value is already in the execution pipeline). Heavy spilling can turn a tight inner loop into a memory-bound bottleneck.

If you have read Assembly Part 3: Stack Frames, you know that a function’s stack frame grows to accommodate local variables. Spilled registers are precisely the reason those stack frames expand — every spill slot adds 8 bytes (on a 64-bit architecture) to the frame size, which the prologue must reserve via sub rsp, N or sub sp, sp, #N.

Rematerialization — The Antidote to Spilling

For certain values, the compiler has a smarter option than spilling: rematerialization. Instead of paying the cost of a store and a load, the compiler simply recomputes the value wherever it is needed. This works for values that are cheap to recalculate — constants, frame pointer offsets, simple arithmetic like x + 1, or addresses derived from global variables.

Consider a constant like 42. Spilling it would require a store and a load (two memory operations). Rematerializing it requires a single mov eax, 42 or mov w0, #42 — a 1-cycle operation that uses zero memory bandwidth. LLVM’s RAGreedy automatically identifies rematerializable values during the allocation process, preferring rematerialization over spilling whenever the recomputation cost is lower than the memory access cost.

6. Register Allocation in Action: -O0 vs -O2 on Godbolt

The best way to understand register allocation in compilers is to see it yourself. Let’s take a function with deliberately high register pressure — 8 parameters and 8 intermediate variables — and compare how the compiler handles it at different optimization levels.

int heavy_compute(int a, int b, int c, int d,

int e, int f, int g, int h) {

int v1 = a * b + c;

int v2 = d - e + f;

int v3 = g * h - a;

int v4 = b + c * d;

int v5 = e - f * g;

int v6 = h + a - b;

int v7 = c * d + e;

int v8 = f - g + h;

return v1 + v2 + v3 + v4 + v5 + v6 + v7 + v8;

}At -O0: Stack Spill City

Compile this with gcc -O0 or clang -O0 on x86-64, and you will see a massive wall of mov instructions to and from the stack. Every single parameter and local variable gets its own stack slot. The compiler does not even attempt to keep values in registers between operations — it writes each intermediate result to memory and reloads it immediately before the next use. This is -regalloc=fast in action: maximum debuggability, minimum performance.

You will see output resembling this pattern:

# x86-64, -O0: every variable lives on the stack

mov dword ptr [rbp - 4], edi # spill parameter 'a'

mov dword ptr [rbp - 8], esi # spill parameter 'b'

mov dword ptr [rbp - 12], edx # spill parameter 'c'

...

mov eax, dword ptr [rbp - 4] # reload 'a'

mov ecx, dword ptr [rbp - 8] # reload 'b'

imul eax, ecx # multiply a * b

mov ecx, dword ptr [rbp - 12] # reload 'c'

add eax, ecx # add 'c'

mov dword ptr [rbp - 36], eax # spill v1 to stackEvery operation involves a round-trip to memory. The stack frame for this simple function balloons to 60+ bytes.

At -O2: Registers Only

Now compile the same function with -O2. The output is dramatically different. The greedy allocator performs liveness analysis, discovers that most variables’ live ranges do not overlap, and packs everything into registers. On x86-64, you will typically see the entire computation happen in registers like eax, ecx, edx, and r8d–r10d with zero stack spills. On ARM64, the function is even cleaner — with 31 GPRs available, every variable fits comfortably in registers with room to spare.

Try it yourself on Compiler Explorer — click the links below to see each scenario live:

Interactive: x86-64 at -O0 — Stack Spills Everywhere

Interactive: x86-64 at -O2 — Registers Only, Zero Spills

Interactive: x86-64 vs ARM64 at -O2 — The Register File Advantage

The Cross-Platform Register Advantage

The x86-64 vs ARM64 comparison at -O2 is particularly revealing for understanding register allocation in compilers. With 8 function parameters, x86-64’s System V ABI passes the first 6 in registers (RDI through R9) and pushes parameters 7 and 8 onto the stack. The register allocation compiler pass must then reload those two values from memory. ARM64’s AAPCS, by contrast, passes all 8 parameters in registers (X0–X7) — no stack access needed at all. This is a tangible example of how CPU architecture directly shapes the quality of the compiler’s register allocation output.

7. Practical Tips: Helping the Register Allocator

While the register allocator is highly automated, there are coding patterns that can make its job easier — or harder.

Reduce live range overlap. Define variables close to where they are used, and avoid hoisting definitions to the top of a function. The shorter a variable’s live range, the less likely it is to interfere with other variables.

Minimize variables live across function calls. Any variable that is alive across a call instruction must be saved in a callee-saved register or spilled. If you can restructure code so that expensive computations happen after a call rather than before, you reduce the number of values the allocator needs to preserve.

Trust the optimizer. Manually “optimizing” C code by reusing variables (e.g., temp = a; ... temp = b;) does not help — it actually hurts. The compiler works best with SSA-style code where each variable has a single, clear definition. Let the optimizer decide what to merge.

Profile before you worry. Modern allocators like RAGreedy handle register pressure remarkably well. Unless profiling reveals spilling in hot loops, the allocator is almost certainly making better decisions than manual intervention would. Link-time optimization (Part 4: Optimization Passes) can further reduce pressure through cross-module inlining, which eliminates function call boundaries and their associated register save/restore costs.

8. Conclusion

Register allocation in compilers sits at the intersection of mathematical elegance and brutal hardware reality. The compiler must solve what is essentially an NP-hard graph coloring problem for every function, constrained by ABI rules, register class boundaries, and the performance imperative to minimize stack spills. LLVM’s greedy allocator tackles this with sophisticated heuristics — priority-based allocation, live range splitting, eviction, and rematerialization — producing code that keeps your data where the CPU can access it fastest: in registers.

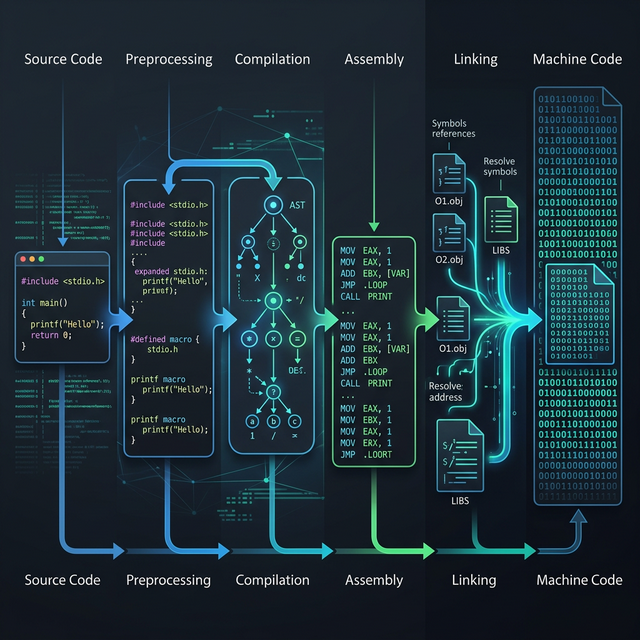

We have now traced the complete journey through the compiler backend. From instruction selection and scheduling in Part 5, through register allocation here in Part 6, your C code has been transformed from abstract IR into fully realized machine instructions with physical register assignments and stack frame layouts. But these instructions still live in isolated object files. In Part 7: Linking Revisited, we will examine how the linker stitches these object files together into a final executable — resolving symbols, merging sections, and producing the binary your operating system loads into memory.